Data Lake Data Catalog

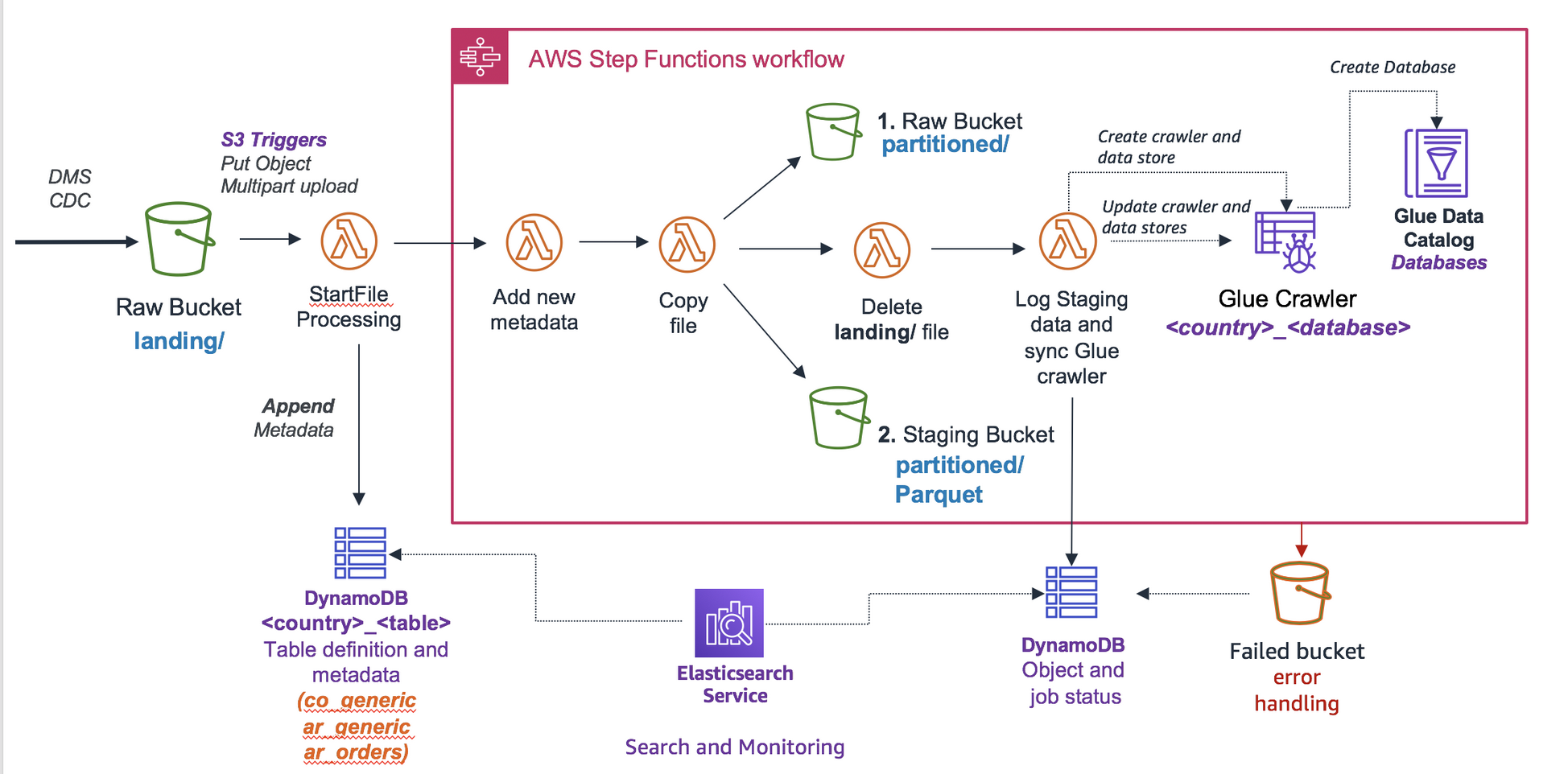

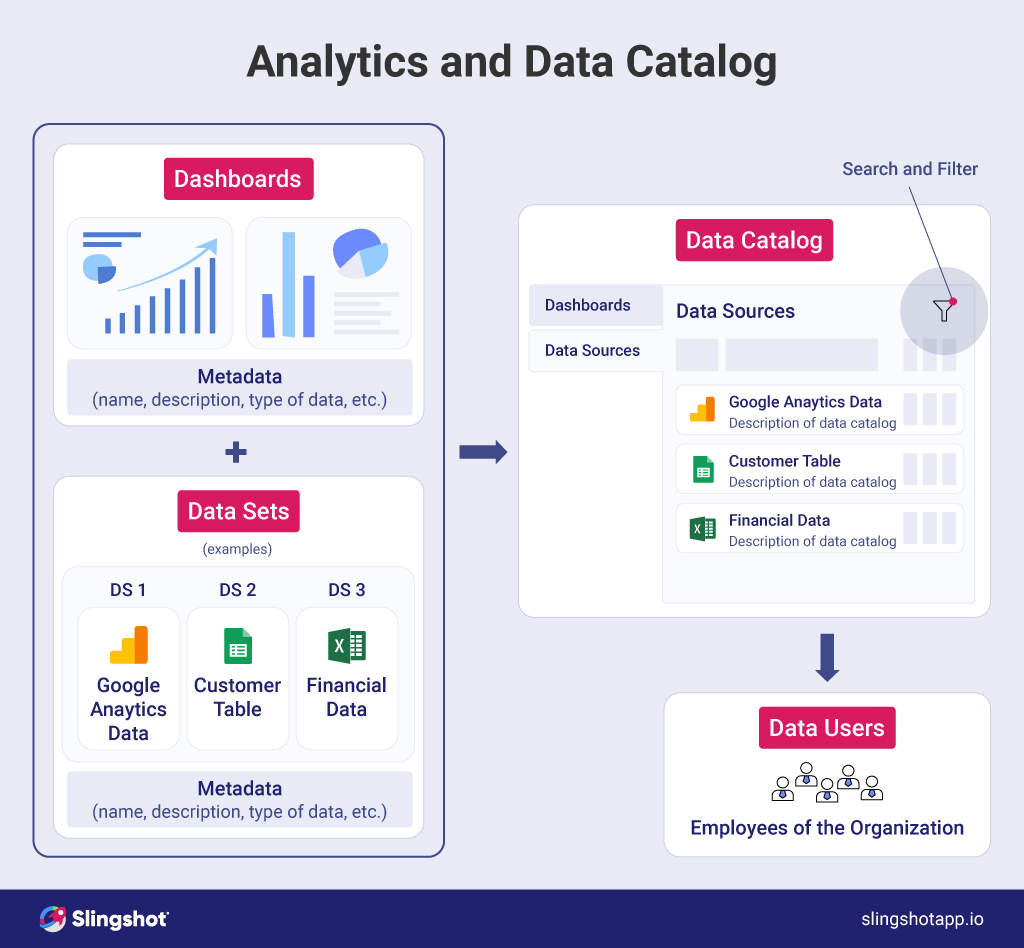

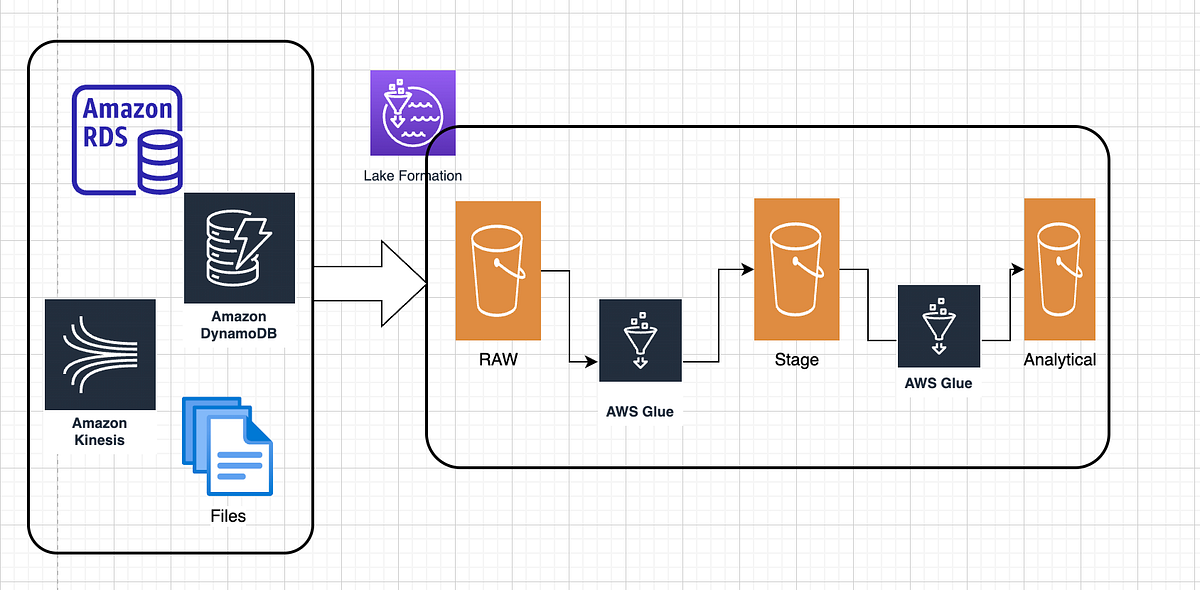

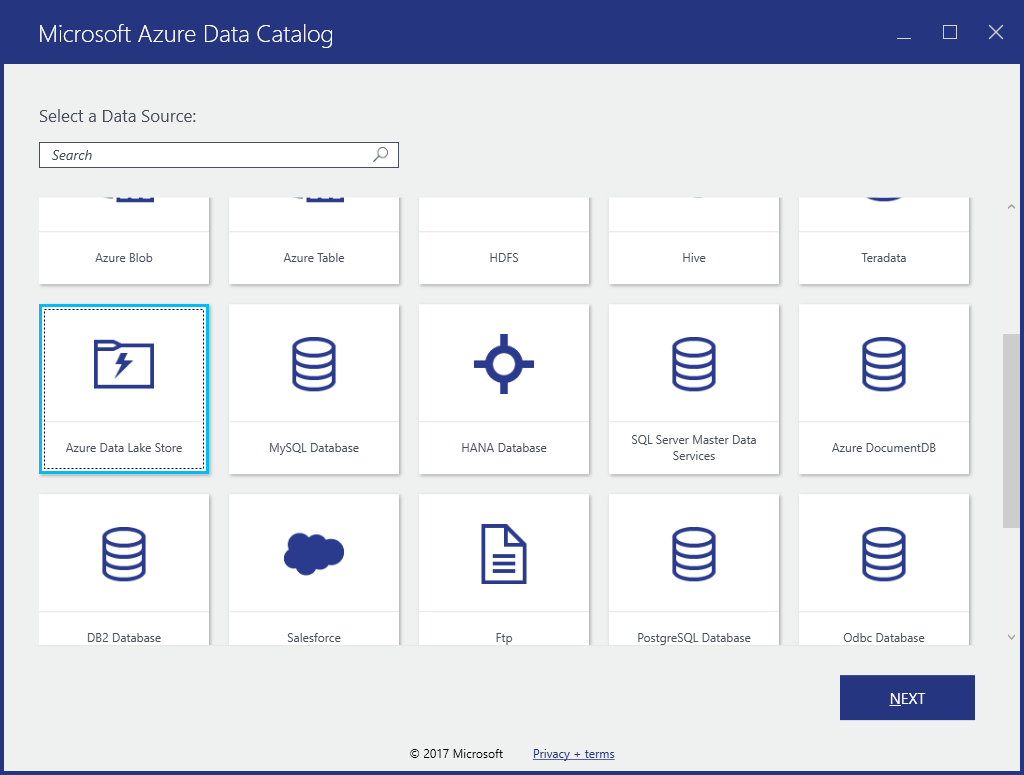

Customers frequently ask: what exactly is a data lake, and what is a data catalog? A data lake is a centralized repository designed to store large amounts of structured, semistructured, and unstructured data, and it can store data in its native format. Data lakes have become essential tools for managing and analyzing vast amounts of data, but they contain several deficiencies and bring about data discovery, security, and governance problems. Implementing a data catalog can solve these problems.

A data catalog is an organized inventory of data assets: a detailed inventory that can help data professionals quickly find the most appropriate data for any analytical or business purpose. It is designed to provide an interface for easy discovery of data.

Vendors approach cataloging in different ways. With the launch of SAP Business Data Cloud (BDC), the data catalog and the data marketplace tabs in SAP Datasphere are being consolidated under a single tab. R2 Data Catalog is a managed Apache Iceberg data catalog built directly into your R2 bucket. Oracle Cloud Infrastructure Data Catalog uses file name patterns and logical entities to help users understand data lakes better. Another product combines data cataloging, stream data capture, Hadoop job management, security, and cloud connectors in a single unified product.
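To illustrate how file name patterns can map many files in a lake to a single logical entity, here is a minimal sketch in plain Python. It is not the Oracle Cloud Infrastructure Data Catalog API; the pattern, the file names, and the function `group_into_logical_entities` are all hypothetical and chosen only to show the idea.

```python
import re
from collections import defaultdict

# Hypothetical naming convention: "<entity>_<YYYY-MM-DD>.<csv|parquet>".
# The named group "entity" decides which logical entity a file belongs to.
PATTERN = re.compile(r"^(?P<entity>[a-z_]+?)_\d{4}-\d{2}-\d{2}\.(csv|parquet)$")


def group_into_logical_entities(file_names):
    """Group file names into logical entities; return (entities, unmatched)."""
    entities = defaultdict(list)
    unmatched = []
    for name in file_names:
        match = PATTERN.match(name)
        if match:
            entities[match.group("entity")].append(name)
        else:
            unmatched.append(name)
    return dict(entities), unmatched


# Illustrative file names only, not taken from any real bucket.
files = [
    "sales_2024-01-15.csv",
    "sales_2024-01-16.csv",
    "web_clicks_2024-01-15.parquet",
    "README.md",
]
entities, unmatched = group_into_logical_entities(files)
print(entities)
print(unmatched)
```

Grouping by pattern means a catalog user sees one "sales" entity rather than one entry per daily file, while files that match no pattern stay visible for review.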
In this edition, we look at data catalog, metadata, and search. Data catalogs help tackle the discovery, security, and governance challenges of data lakes so that data lake users can get more out of them.

R2 Data Catalog exposes a standard Iceberg REST catalog interface. Internally, an Iceberg table is a collection of data files (typically stored in columnar formats like Parquet or ORC) and metadata files (typically stored in JSON or Avro).
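The split between data files and metadata files in an Iceberg table can be sketched with a small classifier. This is a simplification under stated assumptions: it sorts paths by file extension only, the example paths are hypothetical, and a real Iceberg reader follows the table metadata rather than guessing from suffixes (Avro, for example, can also hold data).

```python
from pathlib import PurePosixPath

# Extensions follow the formats named in the text; the mapping is a heuristic.
DATA_SUFFIXES = {".parquet", ".orc"}
METADATA_SUFFIXES = {".json", ".avro"}


def classify_table_files(paths):
    """Sort object paths into data, metadata, and other by file extension."""
    result = {"data": [], "metadata": [], "other": []}
    for path in paths:
        suffix = PurePosixPath(path).suffix.lower()
        if suffix in DATA_SUFFIXES:
            result["data"].append(path)
        elif suffix in METADATA_SUFFIXES:
            result["metadata"].append(path)
        else:
            result["other"].append(path)
    return result


# Hypothetical object paths, for illustration only.
table_files = [
    "warehouse/events/data/part-0001.parquet",
    "warehouse/events/metadata/v1.metadata.json",
    "warehouse/events/metadata/snap-123.avro",
    "warehouse/events/_SUCCESS",
]
classified = classify_table_files(table_files)
print(classified)
```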
In an S3-based data lake, a data catalog contains information about all assets that have been ingested into or curated in the lake. Managed tooling in this space automatically discovers, catalogs, and organizes data across S3, and simplifies setting up, securing, and managing the data lake. Look to create a truly end-to-end data marketplace with a combination of specialized and enterprise data catalogs.
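The idea of a catalog that holds information about every ingested or curated asset, and lets users search it, can be illustrated with a small in-memory sketch. The classes `CatalogEntry` and `DataCatalog`, the bucket name, and the asset names are hypothetical; this is not the API of any AWS service, only a picture of the inventory-plus-search concept.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Metadata describing one asset in the lake."""
    name: str
    location: str
    description: str = ""
    tags: set = field(default_factory=set)


class DataCatalog:
    """A toy inventory of data assets with keyword search."""

    def __init__(self):
        self._entries = {}

    def register(self, entry):
        self._entries[entry.name] = entry

    def search(self, keyword):
        """Return sorted names of entries whose name, description, or tags match."""
        needle = keyword.lower()
        return sorted(
            entry.name
            for entry in self._entries.values()
            if needle in entry.name.lower()
            or needle in entry.description.lower()
            or any(needle in tag.lower() for tag in entry.tags)
        )


catalog = DataCatalog()
catalog.register(CatalogEntry(
    "orders_raw", "s3://example-bucket/raw/orders/",
    "Ingested order events", {"sales", "raw"}))
catalog.register(CatalogEntry(
    "orders_curated", "s3://example-bucket/curated/orders/",
    "Cleaned orders for reporting", {"sales", "curated"}))
catalog.register(CatalogEntry(
    "sensor_logs", "s3://example-bucket/raw/sensors/",
    "Device telemetry", {"iot"}))

print(catalog.search("sales"))
print(catalog.search("telemetry"))
```

Keeping ingested and curated assets in the same inventory is what lets a single search surface both the raw and the cleaned version of a dataset.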
Asking who uses a data lake is like asking who swims in the ocean: literally anyone! 🏄 Anyone can use a data lake, from data analysts and scientists to business users. However, to work with data lakes you need to be familiar with data processing and analysis techniques.

Creating and hydrating self-service data lakes with AWS Service Catalog

GitHub andresmaopal/datalakestagingengine S3 eventbased engine

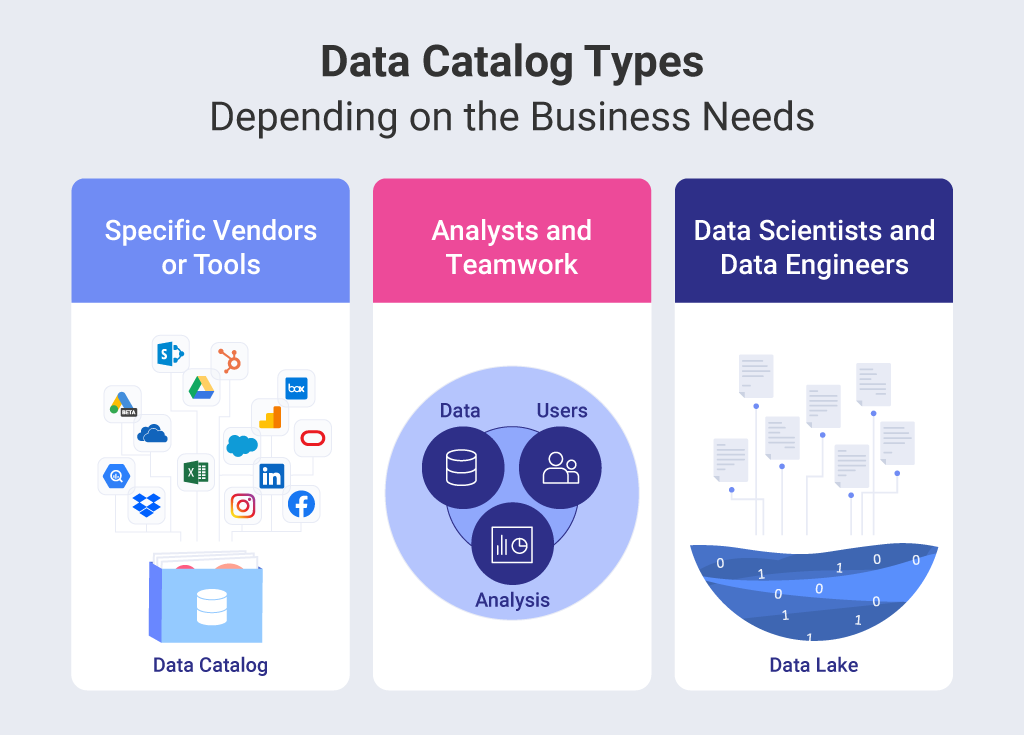

Data Catalog Vs Data Lake Catalog Library

Building Data Lake On AWS A StepbyStep Guide — Lake Formation, Glue

Integrate Data Lake Storage Gen1 with Azure Data Catalog Microsoft Learn

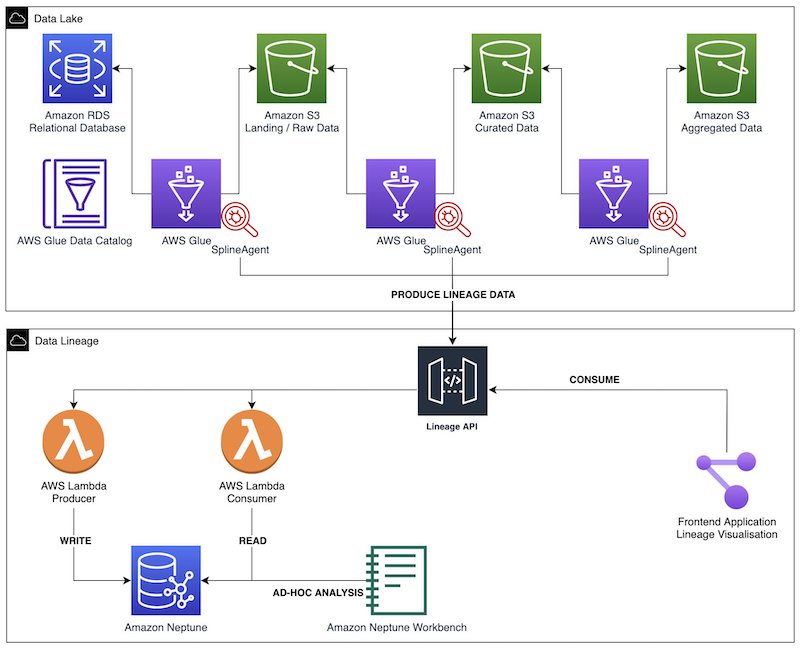

Build data lineage for data lakes using AWS Glue, Amazon Neptune, and

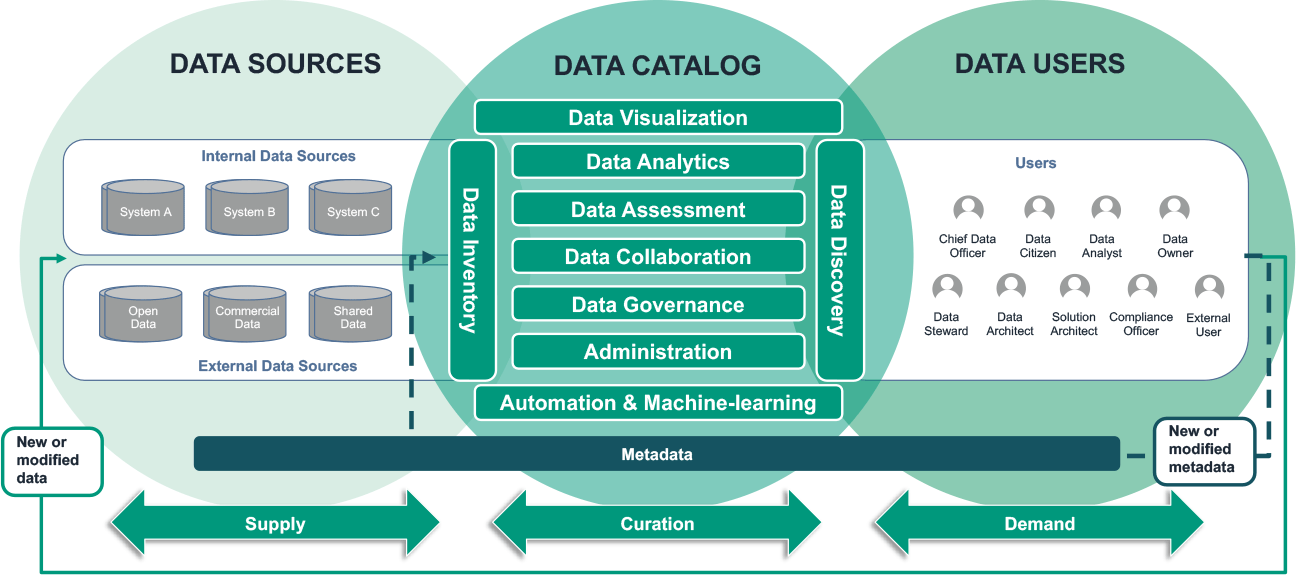

Layer architecture of the data catalog, provenance and access control

3 Reasons Why You Need a Data Catalog for Data Warehouse
